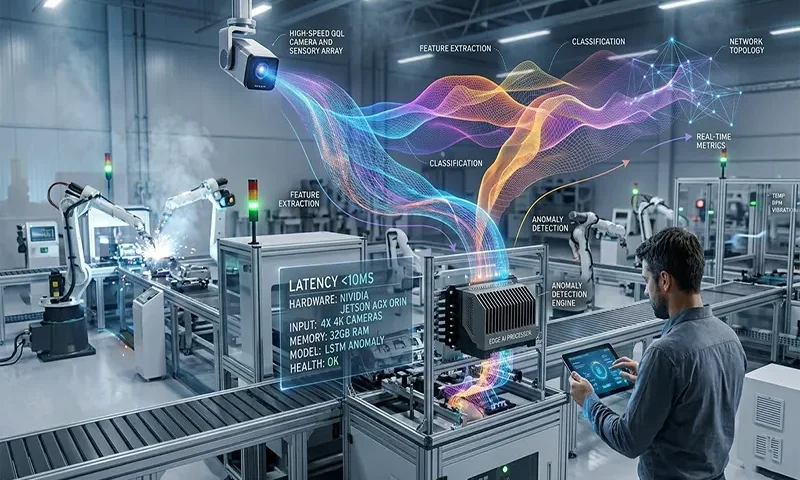

In the 2026 industrial landscape, the transition from “cloud-augmented” to “edge-autonomous” manufacturing is complete. Real-time anomaly detection, the ability to identify and respond to micro-deviations in production within milliseconds, now defines the competitive edge. Achieving it requires a specialized hardware stack that balances raw throughput ($TOPS$) with extreme environmental ruggedization and ultra-low-latency I/O.

The Physics of the Production Line

In modern high-speed manufacturing, “real-time” is no longer a marketing buzzword; it is a physical requirement defined by the speed of the assembly line. A beverage bottling plant running at 1,200 units per minute produces one unit every $50ms$ ($60{,}000\ ms / 1{,}200$), leaving less than $50ms$ to detect a structural flaw and trigger a pneumatic reject arm.
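
A minimal sketch of that budget check: derive the per-unit interval from the line rate, then confirm that the summed pipeline stages fit inside it. The stage names and latencies are hypothetical placeholders, not measurements from any real line.

```python
# Per-unit time budget on a conveyor line, plus a check that a
# hypothetical detect-and-reject pipeline fits inside it.

def unit_interval_ms(units_per_minute: float) -> float:
    """Time between consecutive units, in milliseconds."""
    return 60_000.0 / units_per_minute


def fits_budget(stage_latencies_ms: dict[str, float], units_per_minute: float) -> bool:
    """True if the summed pipeline latency is strictly below the unit interval."""
    return sum(stage_latencies_ms.values()) < unit_interval_ms(units_per_minute)


# Hypothetical stage latencies (assumptions, not measurements).
pipeline = {
    "camera_exposure_and_readout": 8.0,
    "preprocessing": 3.0,
    "inference": 12.0,
    "decision_and_plc_signal": 2.0,
    "pneumatic_actuation": 15.0,
}

print(unit_interval_ms(1_200))       # 50.0
print(fits_budget(pipeline, 1_200))  # True (40 ms < 50 ms)
```

Summing stages makes the point that inference is only one slice of the window; camera readout and the mechanical actuator consume a large share of it.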

Cloud-based AI falls short in this environment because of the latency jitter inherent in wide-area networks. Even with 5G integration, the round-trip time ($RTT$) for data transmission, combined with variable cloud inference times, often exceeds safety-critical thresholds; a hard deadline is met only if the slowest responses, not the average ones, fit inside it. Consequently, anomaly detection has migrated to the “Heavy Edge”: dedicated hardware positioned within meters of the sensors, which delivers deterministic response times and keeps the line operating independently of the wide-area network.
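
Why jitter matters more than the average can be shown with a small calculation: a link whose mean round trip fits the budget can still miss deadlines on its tail. A minimal sketch that compares mean and 99th-percentile latency against a deadline; the sample distribution (mostly 30 ms with occasional 120 ms spikes) is invented for illustration.

```python
import statistics


def deadline_report(latencies_ms: list[float], deadline_ms: float) -> dict[str, float]:
    """Summarize how a latency distribution behaves against a hard deadline."""
    # quantiles(n=100) returns the 99 percentile cut points; index 98 is p99.
    p99 = statistics.quantiles(latencies_ms, n=100)[98]
    misses = sum(1 for x in latencies_ms if x >= deadline_ms)
    return {
        "mean_ms": statistics.fmean(latencies_ms),
        "p99_ms": p99,
        "miss_rate": misses / len(latencies_ms),
    }


# Hypothetical round-trip samples: mostly 30 ms, with occasional spikes.
samples = [30.0] * 95 + [120.0] * 5
print(deadline_report(samples, deadline_ms=50.0))
# {'mean_ms': 34.5, 'p99_ms': 120.0, 'miss_rate': 0.05}
```

The mean of 34.5 ms looks comfortably inside a 50 ms window, yet one unit in twenty would pass the reject arm before a decision arrives.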

Core Compute Requirements: The Architecture of Inference

The hardware heart of anomaly detection has shifted away from general-purpose CPUs toward heterogeneous architectures that prioritize parallel processing and specialized tensor operations.

Acceleration Architectures: GPUs vs. NPUs

As of 2026, NVIDIA Blackwell-series MXM modules have become the de facto standard for high-complexity vision tasks. These modules use fifth-generation Tensor Cores with native FP4 precision, which substantially improves performance-per-watt over previous architectures. For specialized vibration and thermal analysis, however, Neural Processing Units (NPUs) integrated into Systems-on-Chip (SoCs) are increasingly used to handle time-series data at a small fraction of a GPU's power draw.

The TOPS Metric

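Tera Operations Per Second ($TOPS$), i.e. trillions of operations per second, is the primary specification for edge selection. Vendors usually quote it at INT8 precision, sometimes assuming structured sparsity, so figures are only comparable when the precision and sparsity assumptions match.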

- Basic Monitoring: Hardware providing $20–50\ TOPS$ is sufficient for multi-sensor fusion (temperature, sound, and pressure).

- High-Resolution Vision: Real-time 4K defect inspection requires $150–400+\ TOPS$ to process frames without dropping data.
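
A rough way to sanity-check these ranges is to multiply a model's per-inference cost by the frame rate and the number of camera streams, then divide by the fraction of peak throughput the accelerator actually sustains. The sketch below is that back-of-envelope estimate only; the 500 GOPs-per-frame model cost, four 60 fps cameras, and 30% utilization are hypothetical assumptions, and real sizing should come from profiling on the target hardware. Note that model costs are often quoted in MACs, where one multiply-accumulate counts as two operations.

```python
def required_tops(gops_per_inference: float, fps: float, streams: int,
                  utilization: float) -> float:
    """Peak TOPS needed so that sustained throughput covers the workload.

    gops_per_inference: model cost in giga-operations per frame (assumed).
    utilization: fraction of the vendor's peak TOPS actually sustained (assumed).
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    sustained_tops = gops_per_inference * fps * streams / 1_000.0  # GOPS -> TOPS
    return sustained_tops / utilization


# Hypothetical: a 4K defect model costing 500 GOPs per frame,
# 4 cameras at 60 fps, accelerator sustaining 30% of its peak rating.
print(round(required_tops(500, 60, 4, 0.30)))  # 400
```

The utilization term is the one most often forgotten: a workload that needs 120 TOPS of sustained compute can call for a device rated at several times that.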

Memory and Storage

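Latency is often bound by memory bandwidth rather than raw compute. LPDDR5x memory with a minimum bandwidth of $270\ GB/s$ is required for high-resolution vision workloads, although most of that bandwidth goes not to the raw frames (an uncompressed 8-bit RGB 4K stream at 60 fps is only about $1.5\ GB/s$) but to the weights and intermediate activations the accelerator reads and writes on every inference. Furthermore, fast local NVMe Gen5 storage supports “black box” logging: the system continuously buffers high-frequency sensor data and, when an anomaly is detected, persists the preceding $500ms$ for forensic analysis.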
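
Pre-trigger capture of this kind is commonly implemented with a fixed-size ring buffer in RAM that is flushed to storage only when an anomaly fires, so the drive sees bursts rather than a continuous write stream. A minimal sketch using `collections.deque(maxlen=...)`; the `PreTriggerBuffer` class, the 10 kHz sample rate, and the `flush` callback are hypothetical choices for illustration.

```python
from collections import deque
from typing import Callable, Sequence


class PreTriggerBuffer:
    """Hypothetical helper: keeps the most recent `window_ms` of samples
    and hands them to a writer callback when triggered."""

    def __init__(self, sample_rate_hz: int, window_ms: int,
                 flush: Callable[[Sequence[float]], None]) -> None:
        capacity = sample_rate_hz * window_ms // 1_000
        # A deque with maxlen silently discards the oldest sample when full.
        self._buf: deque[float] = deque(maxlen=capacity)
        self._flush = flush

    def push(self, sample: float) -> None:
        self._buf.append(sample)

    def trigger(self) -> None:
        # Snapshot the window (oldest sample first) and hand it to the writer.
        self._flush(list(self._buf))


# Hypothetical usage: 10 kHz vibration channel, 500 ms pre-trigger window.
captured: list[list[float]] = []
buf = PreTriggerBuffer(sample_rate_hz=10_000, window_ms=500, flush=captured.append)
for i in range(12_000):  # 1.2 s of samples
    buf.push(float(i))
buf.trigger()
print(len(captured[0]), captured[0][0])  # 5000 7000.0
```

In a real deployment the `flush` callback would write to NVMe on a separate thread so that logging never stalls the inference loop.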

Connectivity and Data I/O: The Digital Nervous System

An Edge AI node is only as effective as the data it can ingest. Hardware for 2026 must support a diverse array of industrial I/O:

- Vision Interfaces: Dual 10GbE (GigE Vision) or USB 3.2 Gen 2×2 ports are standard for connecting high-speed industrial cameras.

- Time-Sensitive Networking (TSN): Hardware must support the IEEE 802.1Qbv standard (the time-aware shaper). Its scheduled transmission windows give AI-generated “stop” commands priority over general telemetry on the factory network, so that, when every hop on the path is TSN-capable, an anomaly detection signal reaches the PLC (Programmable Logic Controller) in $<1ms$.

- Legacy Integration: Integrated, isolated RS-485 and CAN bus ports remain critical for extracting telemetry from older robotic arms and CNC machines.
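
IEEE 802.1Qbv does this with a gate control list: a repeating cycle of time slots, each of which opens the transmission gates of selected traffic classes. A simplified sketch that computes the worst-case time a frame of a given class waits for its gate to open; the 250 µs cycle, the slot layout, and the use of class 7 for stop commands are hypothetical, and the model ignores frame transmission time, guard bands, and multi-hop effects.

```python
from typing import FrozenSet, List, Tuple

# One gate-control-list entry: (slot duration in microseconds, traffic classes
# whose transmission gate is open during that slot). The set stands in for the
# 8-bit gate-state mask used by 802.1Qbv.
GateEntry = Tuple[int, FrozenSet[int]]


def worst_case_gate_wait_us(gcl: List[GateEntry], traffic_class: int) -> int:
    """Longest contiguous closed span for a class, including spans that wrap
    from the end of one cycle into the start of the next."""
    if not any(traffic_class in open_tcs for _, open_tcs in gcl):
        raise ValueError("traffic class never gets a transmission window")
    longest = current = 0
    # Walking the list twice measures a closed span that wraps around whole.
    for duration_us, open_tcs in gcl + gcl:
        if traffic_class in open_tcs:
            current = 0
        else:
            current += duration_us
            longest = max(longest, current)
    return longest


# Hypothetical 250 us cycle: class 7 carries stop commands in a protected
# slot; classes 0-6 (telemetry) share the rest of the cycle.
schedule: List[GateEntry] = [
    (50, frozenset({7})),
    (200, frozenset(range(7))),
]
print(sum(d for d, _ in schedule))           # 250
print(worst_case_gate_wait_us(schedule, 7))  # 200
print(worst_case_gate_wait_us(schedule, 0))  # 50
```

In this model a stop command waits at most 200 µs per hop for its window, before transmission and propagation time are added, which is how a TSN schedule turns a best-effort network into one with a bounded delay.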

Environmental and Ruggedization Standards

Manufacturing floors are hostile environments for traditional electronics. Hardware must be “Industrial-Grade,” defined by:

- Thermal Management: Fanless designs are mandatory to prevent the intake of metallic dust or oil mist. This requires advanced heat-pipe cooling systems capable of operating in ambient temperatures from $-20°C$ to $70°C$ without thermal throttling.

- Vibration and Shock: Compliance with MIL-STD-810G (or its 2019 successor, MIL-STD-810H) is necessary for units mounted directly on robotic gantries or heavy presses.

- IP Ratings: At a minimum, IP65-rated enclosures (dust-tight and water-jet protected) are required for food and beverage or chemical processing environments.
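
Whether a fanless unit can hold its clocks at the top of that temperature range comes down to a steady-state thermal budget. To first order, junction temperature is ambient temperature plus power times the junction-to-ambient thermal resistance, $T_j = T_a + P \cdot R_{\theta JA}$. A minimal sketch of that check; the 150 W load, 0.2 °C/W enclosure resistance, and 105 °C throttle point are hypothetical figures, not specifications of any product.

```python
def junction_temp_c(ambient_c: float, power_w: float, r_theta_ja_c_per_w: float) -> float:
    """First-order steady-state junction temperature: T_j = T_a + P * R_theta_JA."""
    return ambient_c + power_w * r_theta_ja_c_per_w


def throttles(ambient_c: float, power_w: float, r_theta_ja_c_per_w: float,
              throttle_c: float) -> bool:
    """True if the steady-state junction temperature reaches the throttle point."""
    return junction_temp_c(ambient_c, power_w, r_theta_ja_c_per_w) >= throttle_c


# Hypothetical figures: 150 W module, 0.2 C/W heat-pipe enclosure, 105 C throttle point.
for ambient in (-20.0, 25.0, 70.0):
    tj = junction_temp_c(ambient, 150.0, 0.2)
    print(f"ambient {ambient:>5.1f} C -> junction {tj:.1f} C, "
          f"throttles: {throttles(ambient, 150.0, 0.2, 105.0)}")
```

With a slightly worse enclosure at 0.25 °C/W, the same 150 W load at 70 °C ambient reaches 107.5 °C and would throttle, which is why the thermal resistance of the heat-pipe design matters as much as the rated ambient range.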

Power and Efficiency Constraints

The 2026 factory operates under strict “Green Manufacturing” energy quotas, so high-performance Edge AI must also be energy-efficient. Compact systems such as the NVIDIA DGX Spark and MXM modules such as the Advantech SKY-MXM series aim for a Thermal Design Power ($TDP$) of $100W$ to $150W$ while delivering vendor-quoted petaflop-scale FP4 performance. This efficiency matters not only for energy costs but also for limiting the heat load the device adds to an enclosed cabinet.
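
Two figures make that trade-off concrete: throughput per watt, and the energy a device consumes running around the clock. A minimal sketch; the 1,000 TOPS peak and 150 W sustained draw are hypothetical round numbers, not vendor specifications.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 h, ignoring leap years


def tops_per_watt(tops: float, power_w: float) -> float:
    """Efficiency metric: peak TOPS divided by sustained power draw."""
    return tops / power_w


def annual_energy_kwh(power_w: float, duty_cycle: float = 1.0) -> float:
    """Energy used over a year at the given average duty cycle (1.0 = 24/7)."""
    return power_w * HOURS_PER_YEAR * duty_cycle / 1_000.0


# Hypothetical node: 1,000 TOPS peak at a 150 W sustained draw, running 24/7.
print(round(tops_per_watt(1_000, 150), 2))  # 6.67
print(annual_energy_kwh(150))               # 1314.0
```

Multiplied across dozens of nodes on a line, a difference of a few tens of watts per device becomes a visible share of an energy quota, and every watt consumed is also a watt of heat the cabinet must shed.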

Hardware-Aware Model Optimization

Hardware is only half the battle. To meet real-time requirements, models must be “compiled” for the specific edge silicon:

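- Quantization: Converting models from $FP32$ to $INT8$ or $FP4$ shrinks the weight memory footprint by 4x or 8x respectively and typically increases inference speed by $3x–5x$; with proper calibration (or quantization-aware training, especially at FP4), the accuracy loss is usually minimal.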

- Compilers: NVIDIA TensorRT and Intel OpenVINO compile a trained model for the target silicon, applying optimizations such as layer fusion, reduced-precision execution, and kernel tuning so that the software can take advantage of hardware-specific instructions.
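
The core of post-training quantization is a scale factor that maps floating-point values onto the integer range. The sketch below shows symmetric, per-tensor INT8 quantization in plain Python to make the arithmetic visible; production toolchains such as TensorRT and OpenVINO layer calibration, per-channel scales, and fused integer kernels on top of this basic idea, and the weight values here are made up.

```python
from array import array


def quantize_int8(values: list[float]) -> tuple[array, float]:
    """Symmetric per-tensor quantization: q = clamp(round(x / scale), -127, 127)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = array("b", (max(-127, min(127, round(v / scale))) for v in values))
    return q, scale


def dequantize_int8(q: array, scale: float) -> list[float]:
    """Recover approximate floats: x_hat = q * scale."""
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.003, 0.9, -0.55]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)

fp32_bytes = array("f", weights).itemsize * len(weights)  # 4 bytes per value
int8_bytes = q.itemsize * len(q)                           # 1 byte per value
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print(list(q))                 # [42, -127, 0, 90, -55]
print(fp32_bytes, int8_bytes)  # 20 5
print(max_err <= scale / 2)    # True
```

The round-trip error of each value is bounded by half the scale step, which is why a single outlier weight (which inflates `max_abs`, and therefore the scale) hurts precision for every other value in the tensor, and why per-channel scales are common in practice.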

Hardware Profile Comparison Table

| Feature | Light Anomaly Detection | Heavy Vision Inspection |
|---|---|---|
| Primary Data | Vibration, temperature, frequency ($Hz$) | 4K video, hyperspectral imaging |
| Target Compute | $30–60\ TOPS$ | $200–1000\ TOPS$ |
| Accelerator | Integrated NPU / entry-level GPU | High-end Blackwell MXM / DPU |
| Memory | $8–16\ GB$ LPDDR5 | $32–128\ GB$ LPDDR5x (unified) |
| I/O Priority | Modbus, OPC UA, CAN | GigE Vision, TSN, Wi-Fi 7 |
| Typical Latency | $<10ms$ | $<100ms$ (end-to-end) |
| Form Factor | DIN-rail mount | Rugged industrial PC / workstation |

Summary: The Autonomous Edge

As we move deeper into 2026, the hardware requirements for anomaly detection have converged toward high-density, low-power, ultra-ruggedized “Heavy Edge” nodes. By prioritizing TOPS-per-watt and TSN-ready connectivity, manufacturers can move beyond reactive maintenance into a state of Adaptive Autonomy, where the edge system detects and acts on deviations before they manifest as downtime.